by Gary Citron, PhD.

While writing his historical novel ‘Roots’, Alex Haley travelled back to his ancestral homeland to learn about a particular aspect of his history. To get to it, however, the tribal chief required him to listen to the tribe’s entire history first. Writing this blog for our series on assurance has given me a better understanding of why.

It helped me recall the circumstances of my first experience as a consultant, which taught me a key message: when starting an assurance review, let the team get their story out, the first time, without interruption. In other words, listen first. Here is how I learned that lesson.

The Call to Action

In 1999, I was deployed to Tokyo by Pete Rose to consult with the Japanese NOC (now JOGMEC). That week in my hotel room I received a call from James Painter at Ocean Energy (OE) to help them implement a risk analysis system. This entailed (1) training their staff on assessment concepts, (2) demonstrating a prospect review process via our ‘surgical theatre’ template of reality checking a prospect characterization and (3) helping implement the central coordination process to compare the prospective apples of one group to the oranges of another via an assurance team.

For a company like OE this was particularly important because of their diverse portfolio, built organically in the USA (from their Flores and Rucks heritage) and from their international opportunities that came in part from their purchase of Meridian Oil.

Their impressive assurance team included a stratigrapher with vast international experience from Shell, two very talented reservoir engineers and a relatively senior exploration manager who had the ear of their CEO, James Hackett. Mr. Hackett went on to distinguish himself further at Devon Energy, which purchased OE in 2003, and particularly later when he joined Anadarko as their CEO. With their highly trained technical staff, savvy assurance team and executive support, OE appeared well positioned to succeed. Prediction accuracy would largely depend on assurance execution, and I was hired to assist with assurance design and implementation.

Seek First to Understand…

Part of my remit was to attend several of their initial assurance reviews and advise the assurance team on best practice. I found an alarming pattern in those reviews. The technical staff were upset that these talented assurance guys were dissecting their prospects before even hearing about the play context. The feedback I heard from the technical teams was that, if the assurance team’s behavior continued, they would be reluctant to seek further engagement, much less participate in future reviews.

To paraphrase, ‘what was the point of having them if we could not explain the nature of the opportunity?’ That feedback was easy to compile, but hard to share with the assurance team. I suspect they hadn’t heard much criticism in their careers, in which they had advanced rather rapidly to key technical and managerial roles.

Fortunately, the feedback was readily accepted (after some disbelief stated along the lines of “really?”), and the team made sure they structured their sessions with the discipline to wait until the key points were established, which then provided a foundation for questions and a critical review of the uncertainty and chance factors associated with key parameters.

The take-away we apply for other clients: assurance teams should issue engagement guidelines to the teams that generate opportunities. Such guidelines describe what the assurance team needs to see in a review (and in what sequence) and, in return, what the assurance team provides.

Results

Having overcome the initial hiccup, OE established an enviable record of prediction accuracy over the next three years.

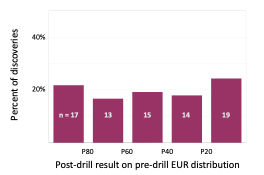

The percentile histogram (Enciso et al., 2003) shows virtually no estimation bias; perfection would be a flat line across at 20%. As defined by Otis and Schneidermann (1997) in their landmark paper illustrating how an assurance team can make an impact, percentile histograms show the percent of discoveries that fall in prescribed buckets, measured by where the result for each discovery plots on that prospect’s success-case EUR distribution.
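For readers who like to see the arithmetic, here is a minimal Python sketch of the bucketing idea described above. It is not the method used by OE or by the cited authors. It assumes five equal buckets (hence the 20% target), uses made-up numbers, and treats percentiles as cumulative (the fraction of the forecast at or below the actual result). Companies that quote percentiles as probability of exceedance would see the buckets in mirrored order. The function names and data are hypothetical.

```python
from bisect import bisect_right


def cumulative_percentile(samples, actual):
    """Fraction (0..1) of forecast samples at or below the actual result."""
    ordered = sorted(samples)
    return bisect_right(ordered, actual) / len(ordered)


def percentile_histogram(percentiles, n_bins=5):
    """Percent of discoveries falling in each equal-width percentile bucket."""
    counts = [0] * n_bins
    for p in percentiles:
        # A result at the very top of the forecast range goes in the last bucket.
        index = min(int(p * n_bins), n_bins - 1)
        counts[index] += 1
    total = len(percentiles)
    return [100.0 * c / total for c in counts]


# Hypothetical data: one stand-in success-case EUR distribution (values 1..100)
# shared by ten discoveries, with invented actual results for each discovery.
# In practice every prospect file would carry its own distribution.
forecast = list(range(1, 101))
actuals = [5, 15, 30, 45, 50, 70, 85, 95, 10, 65]

percentiles = [cumulative_percentile(forecast, a) for a in actuals]
histogram = percentile_histogram(percentiles)
print(histogram)  # [30.0, 10.0, 20.0, 20.0, 20.0]
```

In this invented example the lowest bucket holds 30% of the discoveries instead of 20%, the kind of departure from a flat line that would prompt a closer look.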

This type of graph facilitates benchmarking against other companies to illustrate thematic estimation problems across the industry. We’ve noted in our prospect risk analysis course that many companies have percentile histograms where more than 40% of their discoveries fall below the forecast P80 of the EUR distribution. The next blog will delve into percentile histogram construction and diagnosis in more detail, so stay tuned.

While there are many attributes to successful assurance efforts (which we will further explore in this blog series), active but patient listening in an assurance review is a good start.

References

Enciso, G., Painter, J. and Koerner, K.R., 2003, Total Approach to Risk Analysis and Post-audit Results by an Independent, Poster presentation to the AAPG International Convention, Barcelona, Spain.

Otis, R. and Schneidermann, N., 1997, A Process for Evaluating Prospects, AAPG Bulletin, v. 81, no. 7, pp. 1087-1109.

About the Author

Gary served on Amoco’s assurance team from 1994 to 1999. In 2001 he became Pete Rose’s first partner in Rose & Associates. He helped create the Risk Coordinators Workshop series in 2008, which remains active.